Live the Story: Why Gaudio Dub is the Answer

Introducing Gaudio Dub through real dubbing projects and what we learned from them

Gaudio Lab brings a wide range of technologies to bear on a single piece of content: DME (dialogue, music, and effects) separation, voice replacement, holistic content analysis, language localization, voice casting, emotion mapping, mixing, and mastering. We are an AI tech company that has won six CES Awards over four consecutive years!

This post, however, is less about the technology and more about the real dubbing projects we have taken on, and the hard-won know-how we have built along the way.

Do you prefer subtitles or dubbing when watching foreign-language content?

Every piece of content has something the viewer needs to focus on. In a film, it is the actors' expressions. In a sports or esports broadcast, it is the action itself. Yet most people are still more accustomed to subtitles than dubbing. The problem is that a viewer reading subtitles is only half-watching the screen. In the very instant an actor's face contorts with emotion, their eyes are chasing text at the bottom of the frame. Dubbing gives those eyes back to the screen.

This is exactly why global OTT platforms are looking beyond subtitles to dubbing — they understand the value of letting viewers live the story while watching the content.

We live in a world where AI generates images and creates videos. Now it does dubbing, too. But whenever the topic of AI dubbing comes up, the reactions tend to sound similar:

"AI dubbing? How is that any different from just running TTS?"

"Sure, it's fast — but the quality… I've listened to a few and honestly, it's not there yet."

Given the quality of most AI dubbing services on the market, these reactions are understandable. Upload a video, press a button, get a result. Fast and convenient. But anyone can tell it was generated by AI. For a knowledge-based YouTube video, that might be good enough. But demand for dubbing is rapidly spreading to short-form content, variety shows, films, dramas, and beyond.

Fast delivery. High quality.

So — can AI dub an entire drama? At broadcast-ready quality?

With today's technology, that might sound impossible… but as always, Gaudio Lab found a way. And proved that speed and quality are not an either-or proposition. Here is how we achieve AI-level speed without compromising broadcast-level quality, illustrated through several real-world cases.

Different content demands different dubbing

Gaudio Lab has dubbed films (romance, courtroom thrillers, campus dramas, horror…), dramas (from romance to over-the-top melodramas), kids' content, variety shows (cooking, mukbang, survival competitions), documentaries, sports and esports broadcasts, animation, corporate videos, dating shows — and more.

The conclusion from all of this work: when the content is different, the dubbing must be different, too. Every genre has its own appeal — and its own hidden challenges. When people ask about AI dubbing, the question is usually "What can the technology actually do?" But once you are in the trenches, the real question becomes "Do you understand what matters most for this specific content?"

Let us walk through a few of those projects to show what we focus on, genre by genre.

Horror Films — AI-Generated Screams… Not Scary at All!!

What makes a horror film terrifying is not just the ghost on screen — it is the sound. The slow creak of a door, the wind howling outside a dark window, a scream… (Personally, the thing that scares me most is the sound of someone holding their breath the moment they sense something is coming.)

So how does an AI-generated scream sound? I normally cannot sit through horror films, yet when I watched one with AI dubbing, it was surprisingly… not scary. The voices were unnatural enough to break immersion entirely.

This is where humans come in. They do not record the lines themselves, but they guide the AI to generate screams that are genuinely frightening and realistic. Through Gaudio Lab's proprietary emotion mapping expertise, we ensure the AI voice delivers sounds that closely match the original.

Music Survival Shows — 100 Contestants…? How Do You Tell My Bias's Voice Apart?

When we took on a large-scale music survival show, the first challenge we hit was the sheer number of people. A hundred contestants, plus MCs and judges… How do you make each voice distinguishable? That was the core problem.

Simply varying vocal timbre was not enough. No viewer can tell 100 people apart by tone alone. And with that many cast members, the person speaking is not always the person on screen.

So we used our AI voice casting technology to define each character's speech profile — speaking pace, habitual filler words, sentence-break patterns — creating 100 distinct, personality-rich voices. We paid extra attention to the MCs and the contestants who survive to the end, because their voices carry through the entire series.
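
As a rough illustration of what a per-character speech profile could look like as data, the sketch below models the three traits named above and flags pairs of characters that a listener might confuse. The class name `SpeechProfile`, its fields, the thresholds, and the sample cast are all hypothetical; this is not Gaudio Lab's actual schema or casting logic.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class SpeechProfile:
    """Hypothetical per-character profile; field names are illustrative."""
    name: str
    words_per_minute: int      # speaking pace
    filler_words: frozenset    # habitual filler words
    mean_pause_ms: int         # typical pause at sentence breaks


def too_similar(a: SpeechProfile, b: SpeechProfile,
                pace_tol: int = 10, pause_tol: int = 50) -> bool:
    """Flag two profiles that may be hard to tell apart (assumed thresholds)."""
    close_pace = abs(a.words_per_minute - b.words_per_minute) <= pace_tol
    close_pause = abs(a.mean_pause_ms - b.mean_pause_ms) <= pause_tol
    same_fillers = a.filler_words == b.filler_words
    return close_pace and close_pause and same_fillers


def confusable_pairs(profiles):
    """Return name pairs whose profiles need more differentiation."""
    return [(a.name, b.name)
            for a, b in combinations(profiles, 2)
            if too_similar(a, b)]


# Made-up cast, for illustration only.
cast = [
    SpeechProfile("MC", 170, frozenset({"well"}), 300),
    SpeechProfile("Contestant 1", 140, frozenset({"um"}), 450),
    SpeechProfile("Contestant 2", 145, frozenset({"um"}), 480),
]
print(confusable_pairs(cast))
```

With a check like this, a casting pass over 100 contestants could surface the pairs that still sound alike before any dialogue is generated.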

K-Drama — Recreating the Creator's Intent, Frame by Frame

Drama is the most demanding category. The dubbed version must align almost perfectly with the creator's original intent, and lip sync must be matched frame by frame. The dubbed voice has to land precisely when the actor's mouth opens and closes — but since speech length and rhythm differ fundamentally across languages, achieving this sync is a formidable technical challenge.

The timing of the mouth opening on the original line "거짓말 하지마" has to match the mouth movement on the dubbed line "Stop lying" for it to feel natural — and frankly, that is an extremely difficult problem to solve. For select titles where likeness rights and other clearances have been secured, we have even employed lip-motion technology for the English dub.
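
To make the timing constraint concrete, here is a minimal sketch that checks whether a dubbed line fits the window in which the actor's mouth is moving. The function names, the 15% tolerance, and the example durations are assumptions for illustration. Real lip sync also depends on mouth shapes, not only on duration.

```python
def required_rate(window_s: float, dubbed_s: float) -> float:
    """Speed factor needed to fit the dubbed audio into the mouth-movement window.

    A value above 1.0 means the dubbed line must be spoken faster;
    a value below 1.0 means it must be spoken slower.
    """
    if window_s <= 0 or dubbed_s <= 0:
        raise ValueError("durations must be positive")
    return dubbed_s / window_s


def fits_naturally(window_s: float, dubbed_s: float,
                   max_change: float = 0.15) -> bool:
    """True if the rate change stays within an assumed naturalness tolerance."""
    return abs(required_rate(window_s, dubbed_s) - 1.0) <= max_change


# Hypothetical durations: a 1.2 s mouth-movement window on the original line
# and a 0.9 s first-pass rendering of the dubbed line.
rate = required_rate(1.2, 0.9)
print(round(rate, 2), fits_naturally(1.2, 0.9))
```

In this made-up case the dubbed line would have to be slowed to about three quarters of its speed, which falls outside the assumed tolerance, so the line would need to be reworded or regenerated rather than simply stretched.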

On top of that, simultaneous multilingual dubbing amplifies the importance of voice casting per language. Even for the same character, the English and Japanese voices each need to feel natural to their respective audiences — so native-language specialists review everything down to vocal tone.

Esports Broadcasts — Accurate Translation and Natural Commentary Voice Are Everything

What we learned from dubbing esports tournament broadcasts is that translation accuracy matters just as much as the dubbing itself. If game terminology, strategy breakdowns, or real-time play-by-play descriptions are off, viewers notice immediately. The gaming community is extremely sensitive to translation errors. That is why we start by building a rigorously vetted glossary at the translation stage.
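
One way a vetted glossary can be enforced is with an automated check that runs on every translated line. The sketch below is an assumption about how such a check might look, not a description of Gaudio Lab's tooling: the function name, the simple substring matching, and the two glossary entries are illustrative only.

```python
def missing_glossary_terms(source: str, translation: str, glossary: dict) -> list:
    """Return source terms whose approved translation is absent from the output.

    Uses plain case-insensitive substring matching, which is a simplification.
    """
    src = source.lower()
    out = translation.lower()
    return [term for term, approved in glossary.items()
            if term.lower() in src and approved.lower() not in out]


# Illustrative Korean-to-English entries; a real glossary would be vetted
# by specialists for the specific game title.
glossary = {
    "한타": "teamfight",
    "정글": "jungle",
}

source_line = "한타에서 정글이 먼저 물렸습니다"
draft = "The jungle got caught first in the big battle"
print(missing_glossary_terms(source_line, draft, glossary))
```

Here the draft uses "big battle" instead of the approved term, so the line would be flagged for a translator to review before dubbing.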

At the same time, the transition between a caster screaming with excitement and calmly breaking down a play needs to sound natural. If the voice is tuned only for shouting, it feels off during calm analysis — and vice versa. Maintaining consistency in a single person's voice across excited and composed moments: that is the core challenge of esports dubbing.

(If you're curious about AI translation, one of the steps in Gaudio Lab's dubbing process, please check out this post!)

So you don't just ship the raw AI output as-is?

No. Honestly, for most content, the answer is "not yet." There are areas that AI dubbing still cannot handle on its own. Beyond the examples above:

Emotional nuance is lost. A single line like "Take care" needs to be choked out, barely held together, if the character is fighting back tears — but cold and resolute if they are severing a relationship in anger. AI can express broad categories like "sadness" or "anger," but it cannot yet capture the subtle variations within the same emotion.

Rhythm becomes uniform. Humans naturally pause just before an important word and speed up as emotion builds. AI struggles to reproduce this organic irregularity, so long monologues or emotionally complex lines can end up sounding like a machine reading a script.

Non-verbal vocal nuance is missing. A sigh before a monologue, laughter woven into dialogue, a scream layered over a shout. The difference between speaking while laughing and laughing mid-sentence — AI still cannot nail that from the start.

"Not everyone can do this — that's exactly why we should!"

The list of AI dubbing limitations could go on and on, which naturally raises the question: "So can you even use AI dubbing at all?" But we chose to focus precisely on those limitations. If it is hard, that means not just anyone can do it — and Gaudio Lab happens to be a place full of wonderfully… unusual people who get excited when they find a hard problem to solve.

When a new challenge had us scratching our heads, one colleague said: "Not everyone can do this — that's exactly why we should. Let's figure this one out together."

The approach is clear — don't force AI to do what it can't

So how did Gaudio Lab solve the problem? We drew a precise line between what AI does well and what humans do well, then built a structure in which the steps run in parallel. The goal is zero compromise on speed or quality, with localized content tailored to the distinct needs of each industry and content type.

For example, in the Voice Casting stage:

- AI handles content analysis, character profiling, and voice generation.
- Humans review the auto-generated voice options and select the best AI voice.

Once voices are cast and Dubbing begins:

- AI generates all dialogue in the target language in one pass.
- Humans review each scene to refine emotional delivery, lip sync, audio quality, and localization, polishing the final output.

Knowing exactly where AI falls short — HITL

This is why we do not rely on AI alone. We operate a HITL (Human-in-the-Loop) structure with professionally trained AI dubbing producers and language specialists. The key is that humans are not creating everything from scratch — they are completing what AI has rapidly drafted.

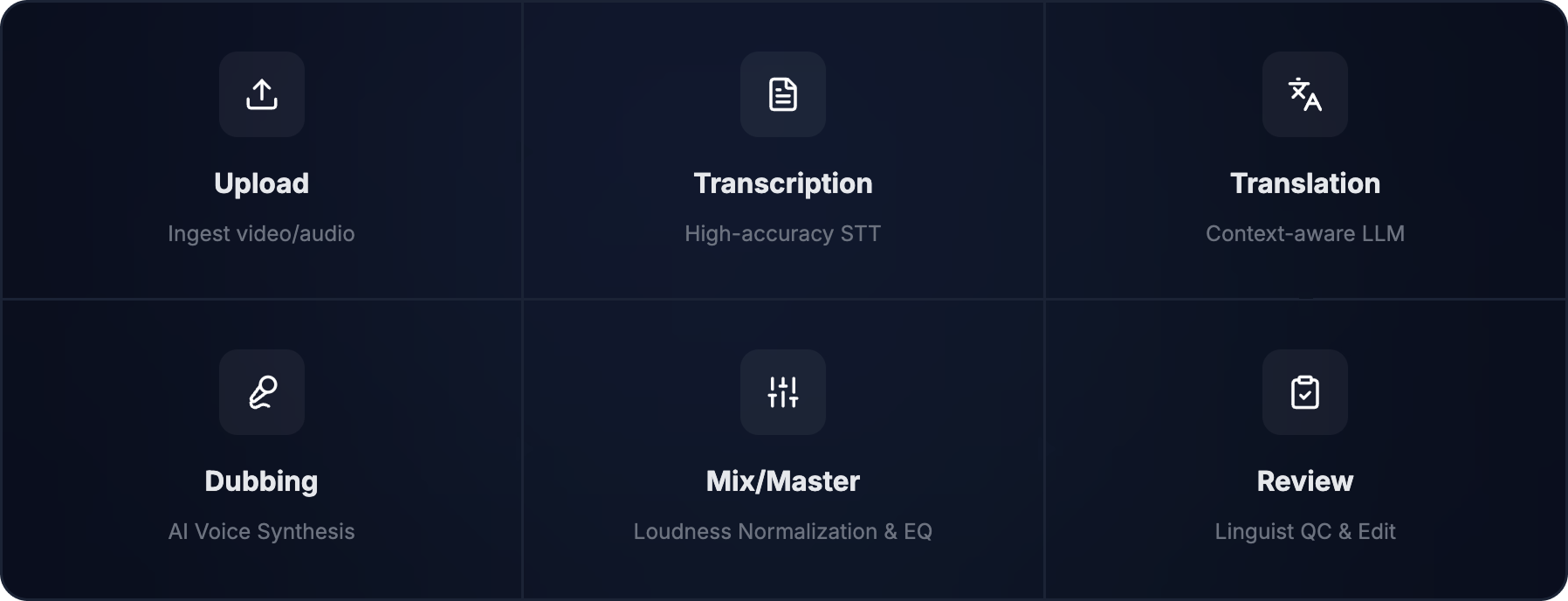

To maximize speed, we also transformed the traditional sequential dubbing workflow into a parallel one. While translation is underway, character voices are already being generated. Translation review and AI dubbing run simultaneously. The entire pipeline operates within a single platform — GSP (Gaudio Studio Pro) — eliminating the friction of switching tools or converting files.
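
The shift from a sequential to a parallel workflow can be sketched with Python's `asyncio`. The stage functions below are hypothetical stand-ins with short sleeps in place of real work, and none of this reflects the actual GSP API; the point is only that translation and voice generation do not depend on each other and can therefore run at the same time.

```python
import asyncio


async def translate(script: str) -> str:
    await asyncio.sleep(0.01)   # stand-in for the real translation step
    return f"translated({script})"


async def generate_voices(characters: list) -> dict:
    await asyncio.sleep(0.01)   # stand-in for AI voice generation
    return {name: f"voice:{name}" for name in characters}


async def run_pipeline(script: str, characters: list) -> dict:
    # The two stages are independent, so they are awaited together
    # instead of one after the other.
    translated, voices = await asyncio.gather(
        translate(script), generate_voices(characters)
    )
    return {"script": translated, "voices": voices}


result = asyncio.run(run_pipeline("episode 1", ["MC", "Judge"]))
print(result)
```

In a sequential version the total time is the sum of both stages. When they run concurrently it approaches the duration of the slower stage, which is where the schedule savings come from.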

This is not just about working faster. It is about whether you can enter the global market ahead of the competition — whether you can launch simultaneously while a title is still generating buzz. It is about seizing the golden window of content localization.

In closing…

What we do is not simply converting text into sound. It is carrying the emotion, atmosphere, character dynamics, and genre density of the original work into another language.

And all of it is possible because AI and humans work together.

DME separation preserves the original audio without degradation. AI Voice Cast designs voices that fit both the character and the target audience. Emotion Mapping transfers the texture of emotion. HITL fills in the judgments AI cannot make. Content-specific pre-production sets the direction before work begins. And our collaboration with professional sound studio Wavelab delivers cinema-grade mastering.

All of these processes run on a single pipeline: Gaudio Studio Pro.

Experience-driven, content-specific dubbing. Gaudio Lab's full-stack AI dubbing delivers the experience of total immersion.