How to Resolve Music Copyright Issues in Global OTT Distribution

K-content exports and global distribution are growing at a rapid pace. Netflix, Disney+, Amazon Prime — simultaneous global releases of Korean dramas and variety shows have become commonplace. Yet in the day-to-day reality of international content distribution, "music copyright" issues frequently become a stumbling block.

This post explains why music copyright becomes a problem during content exports, how the industry has traditionally dealt with it, and how the AI-powered music replacement technology in Gaudio Lab's GSP (Gaudio Studio Pro) is changing the equation.

Why Music Copyright Becomes a Problem When Content Goes Global

Music licensing depends on region, usage type, and a range of other criteria. Even if a piece of music has already been cleared for domestic broadcast, a separate set of rights must be secured when that content is streamed on overseas OTT platforms. Domestic terrestrial broadcast rights, domestic OTT distribution rights, and international streaming rights are each covered by separate contracts, so clearing one does not clear the others.

Here are some real-world examples of how music copyright issues play out in practice:

- A documentary production company tried to sell its content to an overseas OTT platform but could not secure international streaming rights for the music used, and was forced to cut entire scenes as a result.
- A variety show exported to Taiwan saw royalty costs exceed its export revenue, creating a net loss on the deal.
- A YouTube creator used background music in a sports highlight reel, only to have Content ID automatically redirect 100% of the video's revenue to the original rights holder.

These are not edge cases — they are challenges faced by a wide range of content producers and rights holders. As K-content exports continue to grow, music copyright clearing has become a mandatory step in every content pipeline.

What Global OTT Platforms Require

Global OTT platforms hold content to a high delivery standard. Rather than simply accepting a single finished video file, they typically require separate track deliveries: M&E (Music & Effects) or D/M/E (Dialogue/Music/Effects) splits, in which dialogue, music, and effects are delivered as distinct tracks.

Why are split deliveries necessary?

- Multilingual dubbing: Only the dialogue track needs to be swapped out (original language → dubbed language), while music and effects are preserved.
- Music replacement: If a particular music track carries copyright issues, that track alone can be extracted and replaced.
- Local regulatory compliance: Different countries may require different music to be removed or replaced.

In addition, platforms often require a Music Cue Sheet — a document listing every piece of music used in the content, including track titles, composers, publishers, timecodes, and usage types. Cue sheets serve as the basis for royalty accounting.
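As a rough illustration of what one cue sheet row might hold, the sketch below models the fields listed above as a Python dataclass and writes them out as CSV. The field names, the `HH:MM:SS` timecode format, and the example usage types are assumptions made for illustration; actual platform templates differ.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields


@dataclass
class CueSheetEntry:
    """One row of a music cue sheet (field names are illustrative)."""
    title: str
    composer: str
    publisher: str
    start_tc: str    # timecode in, assumed HH:MM:SS
    end_tc: str      # timecode out, assumed HH:MM:SS
    usage_type: str  # hypothetical values such as "background" or "theme"


def to_csv(entries):
    """Serialize cue sheet entries to CSV text with a header row."""
    buf = io.StringIO()
    writer = csv.DictWriter(
        buf,
        fieldnames=[f.name for f in fields(CueSheetEntry)],
        lineterminator="\n",
    )
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buf.getvalue()
```

Calling `to_csv([CueSheetEntry("Song A", "Composer", "Pub", "00:01:00", "00:01:30", "background")])` yields a header line followed by one row per cue, which is the shape royalty accounting typically works from.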

In short, successful international delivery requires all of the following:

- Music copyright clearing or replacement
- D/M/E split tracks
- Music cue sheet

There is no shortage of hurdles to clear before a single drama or variety episode can be exported. Korean content comes with its own added complexity: music used in Korean productions is frequently licensed only for broadcast purposes, meaning every such track must be re-licensed or replaced before export. Given the time and cost involved, resolving music copyright issues has become one of the biggest friction points in K-content distribution.

How the Industry Has Traditionally Handled It

Three approaches have traditionally been used to address music copyright issues:

- Secure new international licenses: This means negotiating additional contracts for international streaming rights on a song-by-song basis. Theoretically the cleanest solution, but it requires individual negotiations for each track, makes cost forecasting nearly impossible, and can take weeks just to clear the rights for a single episode, which may feature 20 or more songs.
- Delete the affected scenes: Simply cutting the scenes that contain unlicensed music. Fast, but it damages the integrity of the content. In scenes where music is integral to the storytelling, removing it can fundamentally alter the emotional impact and undermine the original creative intent.
- Manual music replacement by a sound engineer: A sound engineer separates the music from the original mix and manually replaces it with royalty-free tracks of a similar feel. This produces the best quality results, but a single 60-minute episode can take two to three weeks or more. For a drama airing two to three times per week, this approach is simply not feasible.

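Each method gives up at least one of speed, cost, or quality: licensing is slow and unpredictable in cost, scene deletion is fast but damages the content, and manual replacement delivers quality at the expense of weeks of work. In a world where K-content is being delivered to global platforms on a weekly basis, these approaches run headlong into the speed demands of modern content distribution.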

How AI-Powered Music Replacement Works

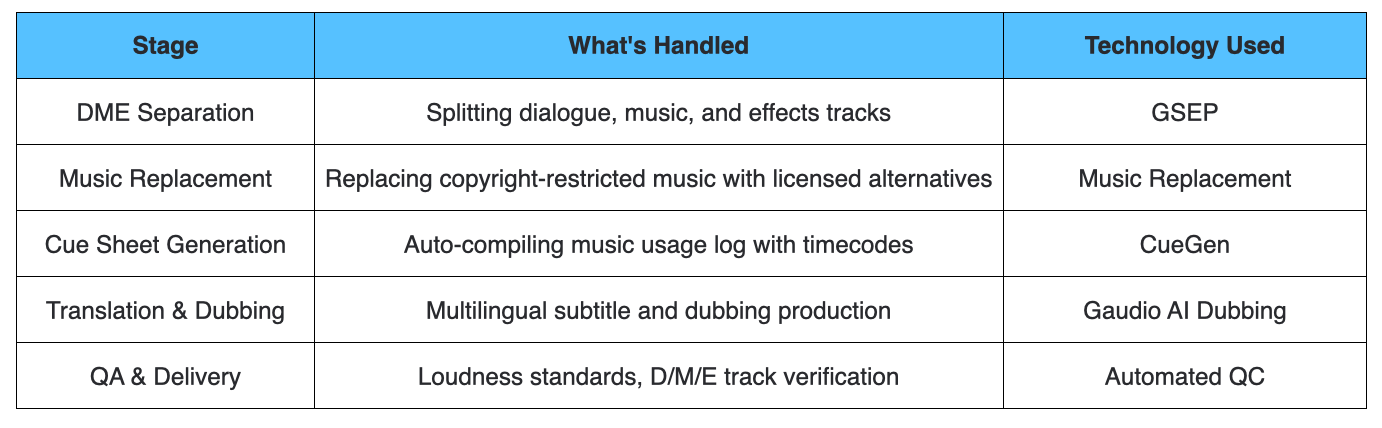

So how does AI-based music replacement actually work? The process unfolds in four stages.

Stage 1: DME Separation — Isolating the Music

AI automatically separates the original audio into Dialogue, Music, and Effects tracks. This step relies on GSEP (Gaudio Source SEParation), Gaudio Lab's proprietary technology and one of the highest-performing source separation systems in the world. The dialogue and effects tracks are preserved as-is, while the music track is extracted separately for replacement.

The quality of the separation is everything here. If dialogue gets smeared or effects are lost in sections where dialogue and music overlap, even perfect music replacement cannot save the final output quality.
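One simple sanity check that can be run on any set of separated stems is to sum them and compare the result with the original mix. The sketch below is not part of GSEP; it is a generic check, with hypothetical function and parameter names, that measures how much energy from the mix is missing from the stems. It catches lost content but says nothing about leakage between stems, which needs listening tests or other metrics.

```python
import math


def reconstruction_error_db(mix, dialogue, music, effects):
    """Residual energy of (mix - sum of stems) relative to the mix, in dB.

    More negative is better; -inf means the stems sum back to the mix exactly.
    """
    if not (len(mix) == len(dialogue) == len(music) == len(effects)):
        raise ValueError("all tracks must have the same number of samples")
    residual = [
        m - (d + mu + e)
        for m, d, mu, e in zip(mix, dialogue, music, effects)
    ]
    mix_energy = sum(x * x for x in mix)
    res_energy = sum(x * x for x in residual)
    if mix_energy == 0.0:
        raise ValueError("mix is silent")
    if res_energy == 0.0:
        return float("-inf")
    return 10.0 * math.log10(res_energy / mix_energy)
```

A value near 0 dB would mean most of the mix is unaccounted for in the stems, which is a signal to inspect the overlapping dialogue and music sections by ear.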

Stage 2: Music Identification — Mapping Every Track

Individual songs are automatically identified within the separated music track. Even when a single 60-minute variety episode contains 100 or more songs, the system can extract a full music cue sheet — including start and end points and track metadata for every cue. The output is in an industry-standard format compatible with broadcasters, OTT platforms, and regulatory requirements worldwide. A music recognition API powers this stage and simultaneously feeds into automatic music cue sheet generation.
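To make the cue-building step concrete, the sketch below assumes a hypothetical recognizer that returns short time-stamped hits of the form `(start_sec, end_sec, track_id)` and merges neighboring hits for the same track into a single cue. The hit format, the `max_gap` threshold, and the function names are illustrative assumptions, not the actual recognition API.

```python
def merge_hits(hits, max_gap=2.0):
    """Merge (start, end, track_id) hits into cues.

    Hits for the same track that are at most max_gap seconds apart
    are treated as one continuous cue.
    """
    cues = []
    for start, end, track_id in sorted(hits):
        same_track = cues and cues[-1][2] == track_id
        if same_track and start - cues[-1][1] <= max_gap:
            prev_start, prev_end, _ = cues[-1]
            cues[-1] = (prev_start, max(prev_end, end), track_id)
        else:
            cues.append((start, end, track_id))
    return cues


def to_timecode(seconds):
    """Format seconds as HH:MM:SS (frame numbers omitted for simplicity)."""
    total = int(seconds)
    return f"{total // 3600:02d}:{total % 3600 // 60:02d}:{total % 60:02d}"
```

Each merged cue then maps directly to a cue sheet row: `to_timecode` supplies the start and end timecodes, and the track ID is the key for looking up title, composer, and publisher metadata.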

Stage 3: Similar Track Matching — Finding the Right Replacement

For each identified track, the AI recommends replacement candidates with similar mood, genre, instrumentation, and energy level. Rather than simply matching by genre, the system converts music into multidimensional vectors and computes similarity scores, so that recommendations stay true to the scene's context. A separate article covers in more detail how the AI finds similar music.

Specifically, the following elements are compared:

- Genre and mood: Ballad, tension, comedic, and so on
- Instrumentation: Solo piano vs. full orchestra
- Tempo and energy: Original BPM and volume dynamics
- Structural progression: Intro → build → climax arc

GSP's premium library of over 110,000 tracks consists of high-quality, fully licensed music created by real musicians — not AI-generated content. This ensures the replacement music can genuinely honor the original creative intent.
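The vector-matching idea from this stage can be sketched in a few lines. The example below assumes each track has already been converted to a fixed-length feature vector and uses cosine similarity as the score; the article does not state which similarity measure GSP uses, so cosine similarity, the toy three-dimensional vectors, and the function names are all assumptions for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_candidates(query, library, top_k=3):
    """Return the top_k (track_id, score) pairs most similar to query.

    library maps track_id -> feature vector.
    """
    scored = [
        (cosine_similarity(query, vec), track_id)
        for track_id, vec in library.items()
    ]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(track_id, score) for score, track_id in scored[:top_k]]
```

With a library such as `{"calm_piano": [1, 0, 0], "tense_strings": [0, 1, 0], "soft_keys": [0.9, 0.1, 0]}` and a query of `[1, 0, 0]`, the ranking puts `calm_piano` first and `soft_keys` second. At the scale of a 110,000-track library, a production system would more likely use an approximate nearest-neighbor index than a linear scan.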

Stage 4: Remixing — Blending It All Together

When the replacement track is combined with the original dialogue and effects, the system preserves the volume envelope of the original music — ensuring the replacement follows the same dynamics. If the original music was a quiet underscore beneath dialogue, the replacement will match that same level. If the music swelled at a climactic moment, the replacement follows the same curve. This is called envelope preservation.
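A minimal way to picture envelope preservation is to measure the loudness of the original music in short frames and scale the replacement so each frame matches. The sketch below does this with a per-frame RMS level on plain lists of samples; the frame size, the RMS measure, and the function names are assumptions for illustration, and a real implementation would smooth the gain between frames to avoid audible steps.

```python
import math


def rms_envelope(samples, frame):
    """RMS level of each consecutive block of `frame` samples."""
    env = []
    for i in range(0, len(samples), frame):
        block = samples[i:i + frame]
        env.append(math.sqrt(sum(x * x for x in block) / len(block)))
    return env


def match_envelope(original, replacement, frame=4, eps=1e-9):
    """Scale replacement so its per-frame RMS follows the original's."""
    n = min(len(original), len(replacement))
    orig_env = rms_envelope(original[:n], frame)
    repl_env = rms_envelope(replacement[:n], frame)
    out = []
    for i in range(n):
        k = i // frame  # index of the frame this sample belongs to
        gain = orig_env[k] / max(repl_env[k], eps)
        out.append(replacement[i] * gain)
    return out
```

If the original is quiet for the first frame and loud for the second, the output keeps the replacement's waveform shape but follows that same quiet-then-loud curve, which is what keeps an underscore beneath dialogue from suddenly overpowering it.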

After the final mix, a professional sound engineer reviews the output. It's a hybrid workflow: AI handles the heavy lifting quickly and accurately, while a human checks the final quality — ensuring a premium result every time.

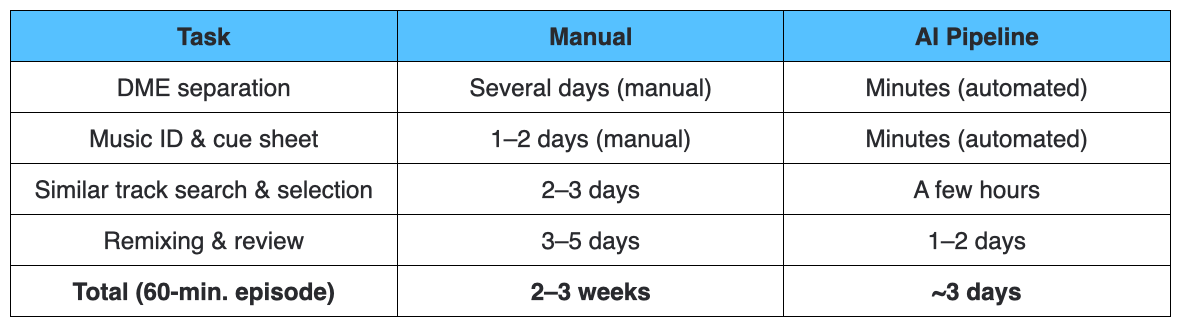

How Much Faster Is It?

Introducing the AI pipeline dramatically compresses delivery timelines compared to manual workflows.

*Timelines may vary depending on content.

For a show airing two to three times a week, manual replacement simply cannot keep pace with the broadcast schedule. GSP's pipeline makes real-time delivery in sync with air dates a reality — compressing a process that once took roughly a month down to about three days.
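The scheduling pressure can be put into numbers with a back-of-the-envelope calculation. The sketch below applies Little's law (items in progress = arrival rate × time per item) to the figures quoted above, treating "roughly a month" as 30 days; that conversion and the function name are assumptions for illustration.

```python
def episodes_in_flight(turnaround_days, episodes_per_week):
    """Average number of episodes being processed at the same time."""
    return turnaround_days * episodes_per_week / 7.0


manual = episodes_in_flight(30, 2)    # about a month per episode, 2 per week
pipeline = episodes_in_flight(3, 2)   # about three days per episode, 2 per week
```

Under these assumptions a manual workflow would need to keep roughly eight or nine episodes in progress at once just to avoid falling further behind, while a three-day turnaround keeps that figure below one.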

Quality Matters Too

A replacement track will never be a perfect replica of the original. Directors and music supervisors make deliberate, intentional choices when selecting music for a scene, and no replacement can fully replicate those intentions. That said, what matters most in a practical content export workflow is not "identical reproduction" — it's "maintaining the viewing experience."

GSP takes the following factors into account as the core determinants of AI matching quality:

- Precision of segment boundaries: Accurately capturing the exact start and end of each cue. A misread boundary on a fade-in or fade-out creates jarring transitions.
- Preserving directorial intent: Evaluating mood and energy match with high fidelity. A comedic cue dropped into a tense scene collapses the emotional architecture of that moment.
- Seamless mixing: Ensuring the replacement track integrates naturally with the dialogue and effects tracks. This means not just swapping in a new song, but mirroring the original volume dynamics to eliminate any sense of artificiality.

Other Challenges in International Content Delivery

Music copyright is just one of several obstacles to clear for a successful international release. Full localization requires an integrated pipeline that covers music replacement and much more.

Music Copyright Is the Bottleneck Holding Back K-Content's Global Reach

The creative strength of K-dramas and K-variety shows is beyond question. Global OTT platforms are actively acquiring Korean content and building dedicated K-content hubs within their platforms — demand continues to grow.

But no matter how good the content is, it cannot cross borders if music copyright issues remain unresolved. And as long as that process depends on manual workflows, the pace at which K-content can be delivered internationally is structurally constrained.

Music replacement through GSP is the key technology that breaks this bottleneck. By automating the full pipeline — DME separation → music identification → similar track matching → remixing — through AI, GSP makes "localized content delivery at broadcast speed" a reality.

Our mission is to keep pushing the boundaries of content export, so that a great piece of content can reach as many markets as possible and contribute to a more diverse revenue picture.

"Making great content is important. Making it possible for that content to cross borders is equally important."