The Future of VR Audio – 3 Trends to Track This Year

2017 has pushed the VR industry forward in countless ways, including the recognition of audio as an absolutely critical element in VR experiences. Here are a few trending efforts that creators are using to push the envelope with sound.

The industry will embrace object-based audio for every kind of experience.

Using object-based audio also gives content creators more creative freedom, since it is easier to apply post-production effects to a single sound. Think of each object as one raw element rather than a big, messy sound glob. In addition, object-based audio is well suited to 6DOF (six-degrees-of-freedom) VR content, which is rapidly growing in popularity.

6DOF content is just like a game: the character moves around the space in every direction and has the agency to interact with objects in the environment. Whenever the character moves or interacts, the sound needs to change accordingly. Because each sound is handled as its own object, it can be positioned precisely and updated as gameplay changes, which is why object-based audio has already been used in 3D game engines for quite some time. As more 6DOF content is built on game engines, it’s plausible that more audio engineers will be forced to learn how to mix and master sound in game engines rather than in their traditional Digital Audio Workstations.
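To make the idea of sound "changing accordingly" concrete, here is a minimal sketch of how a single audio object's loudness and stereo position could be recomputed as a listener moves and turns. It is an illustration only, not how any particular game engine does it: the names `AudioObject`, `Listener`, and `render_gains` are hypothetical, the scene is flattened to 2D, and the inverse-distance rolloff and constant-power panning are assumed simplifications (real engines use HRTFs, occlusion, and configurable rolloff curves).

```python
import math
from dataclasses import dataclass


@dataclass
class AudioObject:
    """A single sound source with a position in the scene (hypothetical type)."""
    name: str
    x: float
    y: float


@dataclass
class Listener:
    """Listener position plus heading; yaw 0 faces +y, positive yaw turns right."""
    x: float
    y: float
    yaw: float  # radians


def render_gains(obj, listener, ref_distance=1.0):
    """Return (left, right) gains for one object relative to the listener."""
    dx = obj.x - listener.x
    dy = obj.y - listener.y
    distance = math.hypot(dx, dy)

    # Assumed rolloff: inverse-distance law, clamped so gain never exceeds 1.
    gain = ref_distance / max(distance, ref_distance)

    # Angle of the source relative to where the listener is facing
    # (0 = straight ahead, +pi/2 = directly to the right).
    azimuth = math.atan2(dx, dy) - listener.yaw

    # Constant-power pan: sin(azimuth) in [-1, 1] maps to a pan angle in [0, pi/2].
    # This ignores front/back differences, which a real spatializer would model.
    pan = (math.sin(azimuth) + 1.0) * math.pi / 4.0
    return gain * math.cos(pan), gain * math.sin(pan)


# The same object, heard from three listener states.
campfire = AudioObject("campfire", 2.0, 0.0)
for state in (Listener(0.0, 0.0, 0.0),
              Listener(0.0, 0.0, math.pi / 2),
              Listener(1.5, 0.0, math.pi / 2)):
    left, right = render_gains(campfire, state)
    print(f"{state} -> L={left:.3f} R={right:.3f}")
```

Because the object keeps its own position, nothing about the sound itself has to be re-authored when the listener moves; only the per-frame gains change. That separation is what a pre-mixed channel bed cannot offer.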

Quality VR content will be published with more players embracing spatial audio.

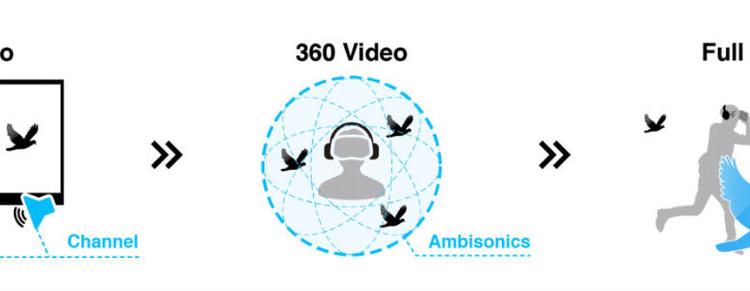

These limits on publishing platforms have discouraged content creators from fully embracing spatial audio in their productions. Still, as appreciation for sound grows, renderers and players will eventually have to support spatial audio. When Vimeo launched Vimeo 360 in March to support 360 content, a large share of user requests asked for a spatial audio feature, and the official help page states that Vimeo is planning to support spatial audio in the near future. Smaller players and platforms will follow the path laid out by Facebook, YouTube, and Vimeo. As user standards for VR content quality continue to rise, adoption of spatial audio will race to keep pace.

Of the many reasons that have kept content publishing platforms from adopting spatial audio, the primary one has been the absence of a dedicated VR audio format along with a compatible renderer. With more emphasis being put on object-based audio, Ambisonics on its own is unlikely to remain the standard format in the future.

Creators will push beyond post-production to create new listening experiences.

Sound is not just a storytelling cue used to encourage VR users to look in a certain direction. In some new use cases, users can choose to hear certain sounds over others within the same experience. One recorded 360 video, for example, lets users hear the instrument they are looking at more clearly than the other instruments placed all around them.
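One way to picture this kind of gaze-driven emphasis is as a per-source gain that depends on how closely the source lines up with the viewing direction. The sketch below is an assumption about how such an effect could be approximated, not a description of how the video mentioned above was produced. The function names and the `floor` and `sharpness` parameters are hypothetical.

```python
import math


def direction(azimuth_deg, elevation_deg):
    """Unit vector for a direction; azimuth 0 is straight ahead, 90 is to the right."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    return (math.cos(el) * math.sin(az), math.sin(el), math.cos(el) * math.cos(az))


def focus_gain(gaze, source, floor=0.3, sharpness=4.0):
    """Gain for one source: 1.0 when looked at directly, falling toward `floor`.

    `floor` keeps off-axis sources audible; `sharpness` narrows the focus cone.
    Both are illustrative tuning values, not taken from any real product.
    """
    alignment = max(0.0, sum(g * s for g, s in zip(gaze, source)))
    return floor + (1.0 - floor) * alignment ** sharpness


# Hypothetical band arranged around the viewer (azimuth in degrees).
instruments = {"guitar": 0, "drums": 90, "bass": 180, "keys": -45}
gaze = direction(0, 0)  # the viewer is looking at the guitar
for name, azimuth in instruments.items():
    print(f"{name}: gain {focus_gain(gaze, direction(azimuth, 0)):.2f}")
```

Applying these gains to separately recorded instrument tracks before spatialization would make the instrument in view stand out while the rest of the ensemble stays present in the background.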

This new wave of sound won’t just be an evolution of current techniques. In many cases, it will give way to revolutionary new forms of entertainment. The virtual canvas for artists expands to a full sphere around the listener, 360 degrees horizontally and 180 degrees vertically, well beyond the physical dimensions of a real-life stage. Musicians will now be able to play with “virtual location” alongside their traditional considerations of pitch, loudness, and timing. They’re also learning how to exploit human auditory perception to influence these experiences at an even deeper level. Psychoacoustic principles that you have already experienced in real life can be used in VR to make each experience different for each individual. While there is a great deal to consider in that realm, our collective knowledge about it continues to grow.