What’s Next For VR Audio


Where VR Audio is At

Before the age of VR, a 2D video story was not influenced by the end user’s interaction. Spatial resolution for audio improved simply by adding more speakers around the rectangular screen in front of the viewer. The biggest hurdle for immersion was instead the ‘present room effect’: one could never fully be there in the story because the virtual world was limited by the screen size. That has changed, because wearing an HMD and headphones blocks out the real world. VR certainly helps the content consumer feel completely transported to a different world, but the level of immersion it delivers still depends on the type of content.

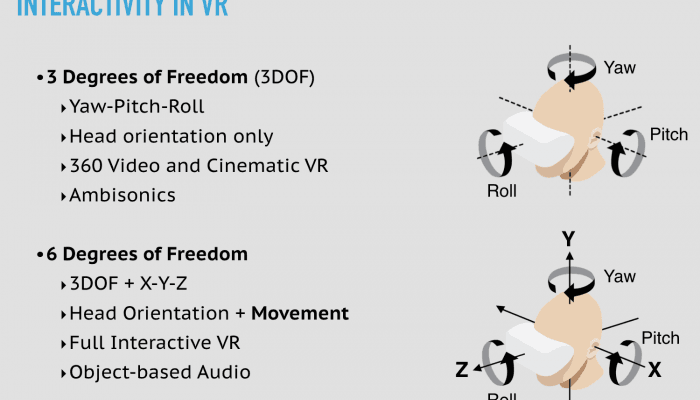

In 360 video, or 3DOF content, the world is pre-rendered. The three-dimensional space is projected onto a sphere that you can look around in any direction at will, but you cannot walk around; your position remains fixed in one spot. This is why Ambisonics, a way of recording and reproducing 3D sound as a snapshot, became such a popular audio format for 360 videos. Just like the 360 video, this spherical audio format can be easily rotated to reflect head orientation in yaw, pitch, and roll. However, Ambisonics suits only 360-type content, where the end user is fixed at one position. Increasing the Ambisonics order does not add interactivity or 6DOF support; it merely increases the spatial resolution. Think of how increasing the pixel resolution doesn’t transform 360 video into walkable video.
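To make the rotation idea concrete, here is a minimal Python sketch of a yaw-only rotation on a single first-order Ambisonics frame. It assumes the traditional B-format convention (X front, Y left, Z up, W scaled by 1/√2) and a yaw angle that is positive when the head turns to the left; the function names are hypothetical, and pitch and roll are left out for brevity.

```python
import math

def encode_first_order(sample, azimuth_rad, elevation_rad=0.0):
    """Encode a mono sample into one first-order B-format frame (W, X, Y, Z).

    Assumed convention: X points front, Y left, Z up, W is scaled by 1/sqrt(2).
    """
    w = sample / math.sqrt(2.0)
    x = sample * math.cos(azimuth_rad) * math.cos(elevation_rad)
    y = sample * math.sin(azimuth_rad) * math.cos(elevation_rad)
    z = sample * math.sin(elevation_rad)
    return (w, x, y, z)

def rotate_yaw(frame, head_yaw_rad):
    """Counter-rotate the sound field by the head yaw.

    A source at azimuth a ends up at azimuth (a - head_yaw), so it stays
    fixed in the world while the listener turns. W and Z are unaffected
    by a rotation about the vertical axis.
    """
    w, x, y, z = frame
    c, s = math.cos(head_yaw_rad), math.sin(head_yaw_rad)
    x_rot = c * x + s * y
    y_rot = -s * x + c * y
    return (w, x_rot, y_rot, z)

# A source directly to the left; the listener then turns 90 degrees left,
# so the source should now sit straight ahead (all energy in X, none in Y).
frame = encode_first_order(1.0, math.pi / 2)
rotated = rotate_yaw(frame, math.pi / 2)
```

Note that nothing in this operation knows where the listener is standing. The whole field turns around a single fixed point, which is why rotation handles head orientation but not movement through the scene.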

Meanwhile, full VR or 6DOF content is rendered in real time while the user interacts and moves around in the scene. This requires the objects in the scene to be controlled individually, rather than as a chunk of pre-configured video and audio. When each sound source is delivered to the playback side as an individual object signal, it can truly reflect both the environment and the way the user is interacting within it. This full control capability of object-based audio may be used in 2D or 360 video, but its potential is best realized in full VR.
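As a rough illustration of why individual objects matter, the sketch below recomputes per-object rendering parameters each time the listener moves or turns. Everything here is an assumption made for illustration: the `AudioObject` type, the 2D coordinate system (x forward, y left, yaw positive to the left), and the simple inverse-distance gain. A real renderer would also handle elevation, room acoustics, and binaural filtering.

```python
import math
from dataclasses import dataclass

@dataclass
class AudioObject:
    """Hypothetical sound object: a name plus a world position in meters."""
    name: str
    x: float
    y: float

def render_params(obj, listener_x, listener_y, listener_yaw_rad, min_distance=1.0):
    """Return (gain, azimuth_rad) for one object relative to the listener.

    Gain follows an assumed inverse-distance law, clamped so that sources
    closer than min_distance do not get louder than unity.
    Azimuth is relative to where the listener is facing, wrapped to [-pi, pi].
    """
    dx = obj.x - listener_x
    dy = obj.y - listener_y
    distance = math.hypot(dx, dy)
    gain = min_distance / max(distance, min_distance)
    azimuth = math.atan2(dy, dx) - listener_yaw_rad
    azimuth = math.atan2(math.sin(azimuth), math.cos(azimuth))
    return gain, azimuth

bird = AudioObject("bird", 2.0, 0.0)

# Listener at the origin facing forward: the bird is 2 m straight ahead.
gain_far, az_far = render_params(bird, 0.0, 0.0, 0.0)

# Listener walks 1 m toward the bird: the gain rises, the direction is unchanged.
gain_near, az_near = render_params(bird, 1.0, 0.0, 0.0)
```

The position-dependent part (distance and gain) is exactly what a pre-rendered spherical signal cannot provide, since it captures the scene from one spot only.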

VR Audio Moving Forward

While more and more VR content is being made in the full VR format, the audio industry is only just catching up with Ambisonics signals for 360 videos. Second-order Ambisonics already requires a minimum of 9 channels, and higher-order Ambisonics is not feasible in many cases because network bandwidth is limited on mobile, not to mention the limited processing power allocated to audio.
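The channel count follows from the fact that a full-sphere Ambisonics signal of order N carries (N + 1)² channels. The short sketch below turns that into a back-of-the-envelope bitrate. The 48 kHz sample rate, 16-bit depth, and uncompressed PCM are assumptions chosen for illustration; real delivery would normally use a codec, so treat the numbers as an upper bound rather than a measurement.

```python
def ambisonic_channels(order):
    """Number of channels in a full-sphere Ambisonics signal of a given order."""
    return (order + 1) ** 2

def raw_pcm_kbps(order, sample_rate_hz=48000, bit_depth=16):
    """Uncompressed PCM bitrate in kbit/s (assumed sample rate and bit depth)."""
    return ambisonic_channels(order) * sample_rate_hz * bit_depth / 1000

for order in (1, 2, 3):
    print(order, ambisonic_channels(order), raw_pcm_kbps(order))
```

Under these assumptions, first order needs 4 channels (3072 kbit/s), second order 9 channels (6912 kbit/s), and third order 16 channels (12288 kbit/s), which shows how quickly the cost grows with each step up in order.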

Some might argue that personalized audio is the most important challenge going forward. Until capturing exact anthropometric information requires far fewer resources than it does now, customization for each person’s ear shape and head size will remain the last step to perfection. Luckily, four out of five people can already feel immersed in a VR scene with a general binaural rendering process. What needs to be figured out in the foreseeable future is how to deliver interactive 3D audio from creators to consumers and across multiple platforms without compromising content quality. Once best practices are determined and a recommended workflow is set, standardizing those practices should follow to improve interoperability.